Running AI on your own hardware has never been more powerful or more accessible.

Selecting the right hardware can be a headache, because what a large language model (LLM) needs differs from what a gaming PC needs.

Here’s what you actually need to know to make the right choice.

Why Run AI Locally?

The case for local AI has never been stronger. You get complete privacy (no data leaving your network), zero per-query fees, offline access, and full control over which models you run. Open-weight models like Meta’s Llama, Google’s Gemma 4, Mistral, and Alibaba’s Qwen have crossed a critical threshold. They are good enough for real work, and they run on hardware you can buy today.

The question is: which hardware should you buy?

This guide breaks down the best local AI machines by budget and use case, from compact mini PCs to full desktop workstations.

The Golden Rule: Memory Is Everything

Before diving into specific machines, understand the single most important rule of local AI: the bottleneck is almost always memory, not processor speed. Whether it's VRAM on a GPU or unified memory on an Apple Silicon Mac, memory capacity determines which models you can load, and memory bandwidth largely determines how fast they respond.

As a rule of thumb:

- 16 GB RAM — small models only (3B–7B parameters), limited headroom

- 32 GB RAM — practical minimum for serious use; runs 7B–13B models comfortably

- 64 GB RAM — runs 30B–34B models at conversational speed

- 128 GB+ RAM — 70B models fully in memory

The price difference between 16 GB and 32 GB configurations is often under $100. Do not skimp here.

Tier 1: Budget Mini PC (~$300–$500)

Best for: Beginners, always-on home AI servers, privacy-focused users

A dedicated mini PC is the smartest entry point for most people. It sits quietly on a shelf, sips around 15–25 watts under load, and costs less per year to run than two months of ChatGPT Plus.
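The running-cost comparison is simple arithmetic. The sketch below is a rough check, not a measurement. It assumes a $20/month ChatGPT Plus subscription, a machine that draws its stated wattage around the clock, and flat electricity rates of $0.15 and $0.30 per kWh. These are illustrative figures, not any utility's actual tariff.

```python
# Back-of-the-envelope yearly electricity cost for an always-on machine.
# Assumptions (illustrative): constant power draw, flat per-kWh rate,
# and a $20/month subscription as the point of comparison.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours
SUBSCRIPTION_PER_MONTH = 20.0  # assumed ChatGPT Plus price in USD


def annual_energy_cost(avg_watts: float, usd_per_kwh: float) -> float:
    """Yearly cost in USD of drawing `avg_watts` continuously."""
    kwh_per_year = avg_watts * HOURS_PER_YEAR / 1000
    return kwh_per_year * usd_per_kwh


if __name__ == "__main__":
    two_months = 2 * SUBSCRIPTION_PER_MONTH
    for watts in (15, 25):
        for rate in (0.15, 0.30):
            cost = annual_energy_cost(watts, rate)
            verdict = "under" if cost < two_months else "over"
            print(f"{watts} W @ ${rate:.2f}/kWh: ${cost:.2f}/yr ({verdict} ${two_months:.0f})")
```

At 25 W the claim holds at $0.15/kWh (about $33 a year) but not at $0.30/kWh (about $66). Plug in your own rate and measured draw.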

Top Pick: Beelink EQR6 (~$389, 32 GB RAM)

This machine runs 7B models around the clock almost silently. Tools like Ollama and LM Studio work out of the box, and the machine can serve models to every device on your home network: your laptop, phone, and tablet, all powered by one local AI brain.

What to look for at this tier:

- AMD Ryzen processors with Radeon integrated graphics generally outperform comparable Intel chips for AI inference

- 32 GB of DDR5 RAM is the target — 16 GB will frustrate you quickly

- Dual 2.5G LAN ports are a bonus for serving models across your network

Performance: Expect 10–20 tokens per second on a 7B model — fast enough for real-time conversation.
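Why does a mini PC land in that range? Token generation is largely memory-bound. Producing each token means reading roughly every weight once, so memory bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below assumes dual-channel DDR5-4800 (a 128-bit bus) and a 7B model at Q4_K_M that occupies about 4.2 GB. Both figures are assumptions for illustration, not measured specs of the Beelink.

```python
# Rough ceiling on decode speed for a memory-bound LLM:
#   tokens/s  <=  memory bandwidth (GB/s) / weight size (GB)
# Real throughput is lower: KV-cache reads, compute, and software overhead add time.


def ddr_bandwidth_gbs(mega_transfers_per_s: int, bus_width_bits: int = 128) -> float:
    """Theoretical peak bandwidth in GB/s (128-bit bus = dual-channel DDR5)."""
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_s * bytes_per_transfer / 1000


def max_tokens_per_second(bandwidth_gbs: float, model_gb: float) -> float:
    """Upper bound on tokens/s if every weight is read once per token."""
    return bandwidth_gbs / model_gb


if __name__ == "__main__":
    bandwidth = ddr_bandwidth_gbs(4800)  # assumed DDR5-4800, about 76.8 GB/s
    model_gb = 4.2  # assumed 7B model at Q4_K_M
    ceiling = max_tokens_per_second(bandwidth, model_gb)
    print(f"{bandwidth:.1f} GB/s -> at most ~{ceiling:.0f} tok/s")
```

The ceiling works out to about 18 tokens per second, which sits at the top of the 10–20 range. Real-world speeds fall below it. The same arithmetic explains why GPUs, with several hundred GB/s of bandwidth or more, are so much faster.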

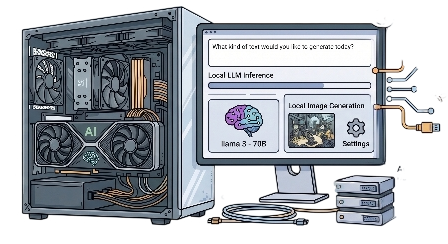

Tier 2: Mid-Range GPU Desktop (~$900–$1,100)

Best for: Developers, power users, coding assistants, image generation

This is where local AI gets genuinely powerful. Adding a dedicated GPU with 12–24 GB of VRAM opens up the 7B–34B model range at speeds that feel instant. A 12 GB card comfortably covers quantized 7B–13B models, while a 24 GB card reaches 30B–34B.

Top Pick: Custom AMD Ryzen 7 build with RTX 4070 (~$900–$1,100)

An AMD Ryzen 7 7700 paired with an NVIDIA RTX 4070 (12 GB VRAM) is one of the most frequently recommended starter GPU builds in 2026. It handles quantized 7B and 13B models with ease, doubles as a capable gaming machine, and gives you headroom to grow.

Why NVIDIA still wins: NVIDIA’s CUDA ecosystem is supported by virtually every AI framework. AMD’s ROCm platform has matured significantly and is now a genuine option — especially the RX 7900 XTX with 24 GB VRAM — but for a first build, NVIDIA offers a smoother experience.

Performance: 20–35 tokens per second on 13B models. Strong enough for coding assistants, document summarization, and general chat.

Tier 3: The Sweet Spot — Apple Mac Mini M4 Pro (~$1,299–$1,999)

Best for: Most users who want the best balance of power, simplicity, and silence

Apple Silicon has emerged as the local AI sweet spot in 2026. The reason is unified memory architecture. The CPU and GPU share one pool of RAM, so most of it can be used for model weights. On a PC, by contrast, the model is limited by the GPU's separate VRAM. The machine runs nearly silently and uses around 65 watts under full AI load.

Top Pick: Mac Mini M4 Pro (24–64 GB unified memory)

A Mac Mini M4 Pro with 48 GB of unified memory runs 30B-class models at 12–18 tokens per second — that’s real-time chat speed. The 64 GB configuration can push even further. If you’re already in the Apple ecosystem and prioritize a “just works” setup over Linux flexibility, this is arguably the best consumer option available.

Performance snapshot:

- 24 GB config: handles 7B–13B models effortlessly

- 48 GB config: 30B models at real-time chat speed

- 64 GB config: headroom for 34B models and for aggressively quantized 70B models

The tradeoff: macOS gives you fewer options for headless server deployment and Docker-based network isolation compared to Linux. If infrastructure flexibility matters, consider a high-end AMD mini PC running Ubuntu instead.

Tier 4: High-End GPU Workstation (~$2,000–$3,000)

Best for: Researchers, developers running 70B+ models, image and video generation

At this tier, the RTX 4090 with 24 GB of VRAM becomes your primary tool. It handles 8-bit inference on 13B models (full 16-bit precision would need about 26 GB). It also runs quantized 34B models comfortably and can push 70B models with partial CPU offloading.
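Partial offloading means splitting the model's layers between VRAM and system RAM. llama.cpp exposes this split through its `--n-gpu-layers` option. The sketch below estimates the split and the resulting speed ceiling. It rests on these assumptions:

- a 70B model with 80 layers at roughly 42 GB (Q4_K_M)
- about 3 GB of VRAM held back for the KV cache and runtime
- the 4090's 1,008 GB/s of memory bandwidth
- 96 GB/s of system RAM bandwidth (dual-channel DDR5-6000)

Treating every layer as the same size is a simplification.

```python
import math

# Estimate how many equal-sized layers fit in VRAM, and the resulting
# decode-speed ceiling when the rest are read from system RAM each token.
# All figures are illustrative assumptions, not benchmarks.


def layers_on_gpu(model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float) -> int:
    """Number of (assumed uniform) layers that fit after reserving VRAM for cache/runtime."""
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb * n_layers / model_gb))


def offload_tokens_per_second(model_gb: float, n_layers: int, gpu_layers: int,
                              gpu_bw_gbs: float, cpu_bw_gbs: float) -> float:
    """Ceiling on tokens/s: per-token time is the sum of reading each portion once."""
    gpu_gb = model_gb * gpu_layers / n_layers
    cpu_gb = model_gb - gpu_gb
    seconds_per_token = gpu_gb / gpu_bw_gbs + cpu_gb / cpu_bw_gbs
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    model_gb, n_layers = 42.0, 80  # assumed 70B model at Q4_K_M
    n_gpu = layers_on_gpu(model_gb, n_layers, vram_gb=24.0, reserve_gb=3.0)
    tps = offload_tokens_per_second(model_gb, n_layers, n_gpu, gpu_bw_gbs=1008.0, cpu_bw_gbs=96.0)
    print(f"{n_gpu}/{n_layers} layers on GPU -> at most ~{tps:.1f} tok/s")
```

Even with half the layers on the GPU, the slower system-RAM half dominates, so the ceiling is around 4 tokens per second. That is why 70B models on a single 24 GB card feel far slower than 30B models that fit entirely in VRAM.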

Top Pick: Custom build with NVIDIA RTX 4090

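With quantization, the 4090's 1,008 GB/s of memory bandwidth runs 30B-class models at roughly 40–50 tokens per second. 70B models are a different story. Even at Q4_K_M they need roughly 40 GB, more than the card's 24 GB, so part of the model runs from system RAM and speed drops to single digits. The card also runs image generation models (Stable Diffusion, FLUX) at serious speed, producing a full 1024×1024 image in seconds.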

Alternative: Mac Studio M4 Max (64–128 GB unified memory, from ~$2,599)

For those who prefer Apple Silicon, the Mac Studio with 64 GB or more of unified memory can hold quantized 70B models entirely in memory. It's slower per token than a dedicated GPU but offers silent operation, minimal power draw, and effortless setup.

Tier 5: Professional Powerhouse (~$3,000+)

Best for: AI researchers, fine-tuning, multi-model workloads

At the top end, options include dual-GPU workstations (two RTX 3090s or 4090s), the NVIDIA DGX Spark, or an AMD Ryzen AI Max+ 395 mini PC with 128 GB of unified memory. The AMD Ryzen AI Max+ 395 chip has garnered significant attention in 2026 for being able to run a 120-billion-parameter model in a portable form factor — at roughly half the price of the DGX Spark.

For pure local LLM text workflows, the AMD AI Max+ 395 is the performance-per-dollar leader at this tier. For mixed workloads (image generation, video, fine-tuning), a dual-GPU NVIDIA rig still has an edge due to CUDA ecosystem maturity.

Quick Comparison

| Tier | Budget | Best Pick | Max Model Size | Typical Speed (model size) |
|---|---|---|---|---|
| Budget Mini PC | ~$400 | Beelink EQR6 (32 GB) | 13B | ~10 tok/s (7B) |
| Mid-Range Desktop | ~$1,000 | Ryzen 7 + RTX 4070 | 13B (fast) | ~25 tok/s (13B) |
| Apple Compact | ~$1,600 | Mac Mini M4 Pro 48 GB | 34B | ~15 tok/s (30B) |
| High-End Desktop | ~$2,500 | Custom RTX 4090 | 70B (offload) | ~40–50 tok/s (30B) |
| Pro Workstation | $3,000+ | AMD AI Max+ 395 / Dual GPU | 120B+ | Varies |

Key Tips Before You Buy

Don’t obsess over NPUs. Intel and AMD processors in 2026 advertise 40–86 TOPS from their Neural Processing Units, but as of now, mainstream tools like Ollama, llama.cpp, and LM Studio do not use the NPU for LLM inference. It benefits video calls and background tasks — not your local AI workflow. Don’t pay a premium for NPU specs.

Always use quantized models. Q4_K_M and Q5_K_M quantization formats are nearly indistinguishable in quality from full-precision models for chat, coding, and summarization tasks. A 30B model at Q4_K_M needs ~18 GB of RAM, compared with ~60 GB at 16-bit precision. Always start quantized.
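These memory figures follow from a simple formula: parameters × bits per weight ÷ 8. The sketch below assumes average effective sizes of roughly 4.8 bits per weight for Q4_K_M and 5.7 for Q5_K_M. These are approximations, and actual GGUF file sizes vary by model. The sketch counts weights only; the KV cache and runtime overhead come on top.

```python
# Approximate memory for a model's weights at a given precision.
# Bits-per-weight values are rough averages (assumption); the KV cache and
# runtime overhead are NOT included.

BITS_PER_WEIGHT = {
    "FP16": 16.0,
    "Q5_K_M": 5.7,
    "Q4_K_M": 4.8,
}


def weight_memory_gb(params_billions: float, fmt: str) -> float:
    """GB (10^9 bytes) needed to store the weights in format `fmt`."""
    return params_billions * BITS_PER_WEIGHT[fmt] / 8


if __name__ == "__main__":
    for params in (7, 13, 30, 70):
        row = ", ".join(f"{fmt} {weight_memory_gb(params, fmt):5.1f} GB" for fmt in BITS_PER_WEIGHT)
        print(f"{params:>3}B: {row}")
```

For a 30B model this reproduces the ~18 GB versus ~60 GB comparison. For 70B it gives about 42 GB at Q4_K_M, which is why 70B models need either a large unified-memory machine or CPU offloading.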

Consider Linux for desktops. Ubuntu gives you the best flexibility: full CUDA and ROCm support, easy Docker deployments, and the widest framework compatibility. macOS is excellent on Apple Silicon. Windows works fine but adds friction for some tools.

One machine can serve your whole home. Tools like Ollama expose an HTTP API that other devices on your network can reach once the server is set to listen beyond localhost. Run the model on one dedicated machine and query it from your laptop, phone, or tablet.
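The snippet below sketches what querying that API from another device looks like. It uses only the Python standard library to build a request to Ollama's `/api/generate` endpoint on its default port, 11434. It assumes two things: the server has been told to listen on the network, and the model has already been pulled. Ollama binds to 127.0.0.1 by default; setting the `OLLAMA_HOST` environment variable to `0.0.0.0` changes that. The LAN address `192.168.1.50` and the model tag are hypothetical placeholders.

```python
import json
import urllib.request

OLLAMA_PORT = 11434  # Ollama's default port


def build_generate_request(host: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST to Ollama's /api/generate endpoint."""
    url = f"http://{host}:{OLLAMA_PORT}/api/generate"
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def ask(host: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (needs a reachable server)."""
    request = build_generate_request(host, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["response"]


if __name__ == "__main__":
    # Hypothetical LAN address and model tag; replace with your own.
    req = build_generate_request("192.168.1.50", "llama3.2", "Why is memory the bottleneck?")
    print(req.get_method(), req.full_url)
    # With a server running: print(ask("192.168.1.50", "llama3.2", "Hello"))
```

With `"stream": False`, the server returns a single JSON object whose `response` field holds the full answer. Any machine that can reach the server's address can send the same request.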

The Bottom Line

For most people, the Mac Mini M4 Pro (48 GB) or a custom Ryzen 7 + RTX 4070 desktop offers the best value in 2026. The Mac Mini runs 30B-class models at real-time conversation speed, while the RTX 4070 build is fastest on 7B–13B models. Both keep your data private, charge no monthly fees, and will stay relevant for years. Treat 32 GB of RAM as your absolute floor; everything above that is headroom for bigger, better models as the open-weight ecosystem continues to evolve.

Local AI is no longer a compromise. For many workflows, it’s now the better default.